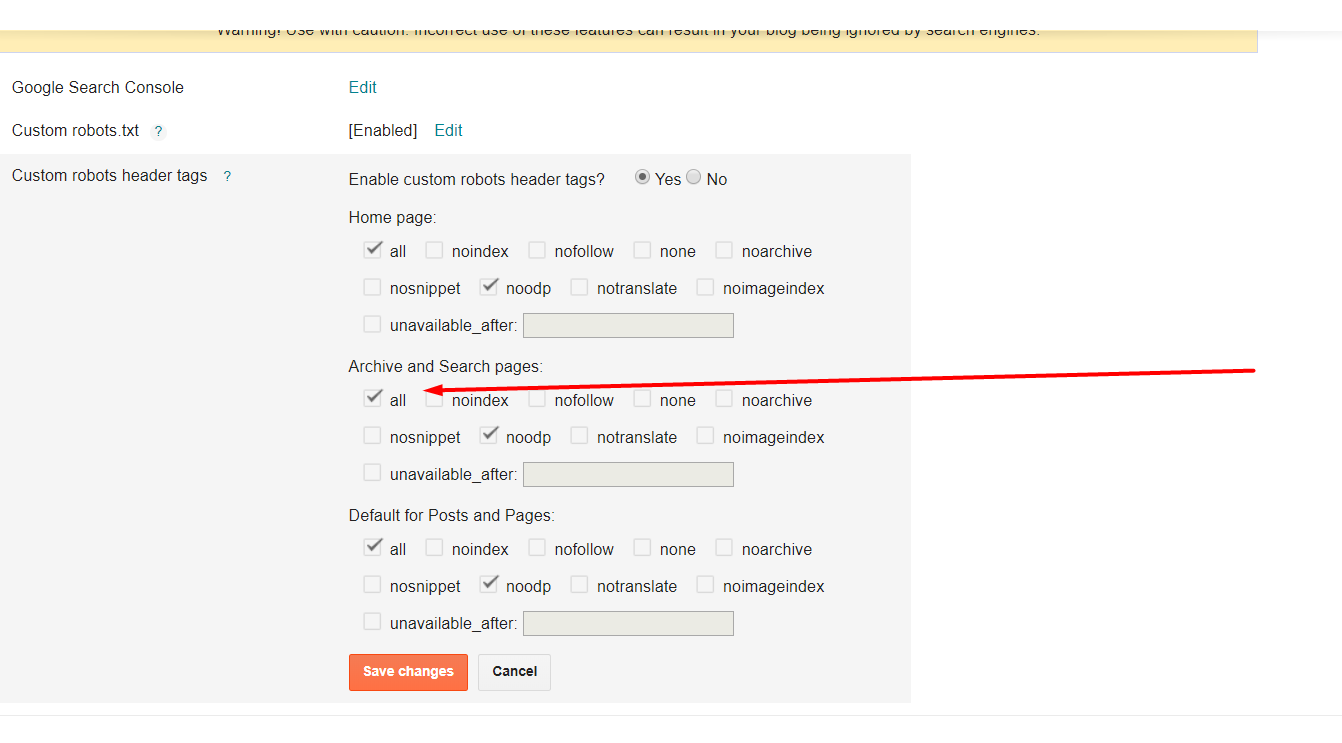

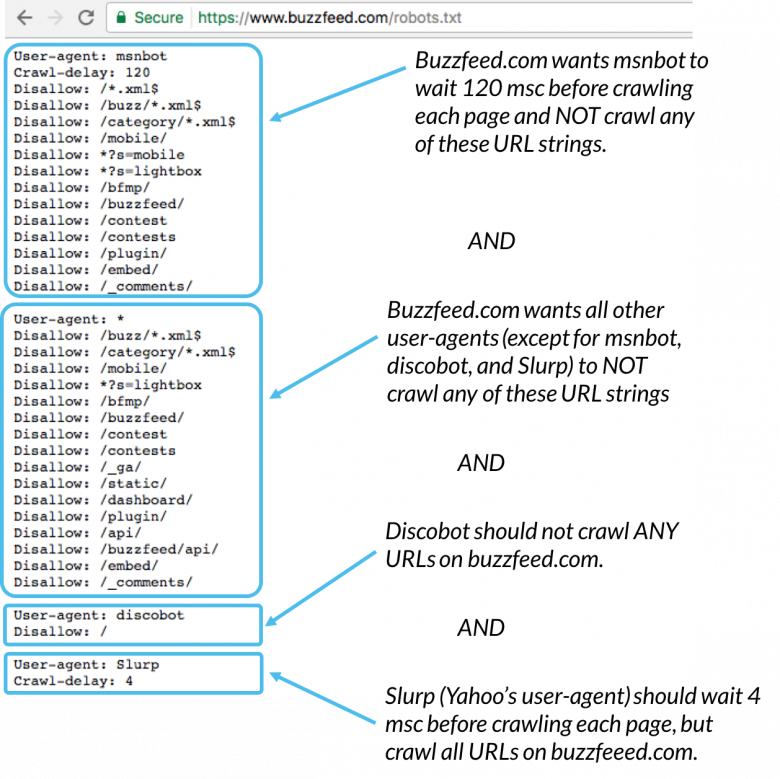

We also use robots.txt to block crawling of WordPress auto-generated tag pages (to limit duplicate content).

Why would you use robots.txt when you can block pages at the page level with the “noindex” meta tag? Like I mentioned earlier, the noindex tag is tricky to implement on multimedia resources, like videos and PDFs.

Also, if you have thousands of pages that you want to block, it’s sometimes easier to block that entire section of your site with robots.txt instead of manually adding a noindex tag to every single page.

There are also edge cases where you don’t want to waste any crawl budget on Google landing on pages with the noindex tag.

Outside of those three edge cases, I recommend using meta directives instead of robots.txt. With meta directives, there’s less chance of a disaster happening (like blocking your entire site).

Learn about robots.txt files: A helpful guide on how Google uses and interprets robots.txt.

What is a Robots.txt File? (An Overview for SEO + Key Insight): A no-fluff video on different use cases for robots.txt.

If someone does come and have a look at your robots.txt file, it might make them smile. Some companies already do this: Airbnb has an advert in their robots.txt file, and Avvo.com has an ASCII drawing of Grumpy Cat.
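Because the noindex directive lives inside each page’s HTML, you can check whether a page carries it by scanning for a `<meta name="robots">` tag. Here is a minimal sketch using Python’s standard `html.parser`, assuming you already have the page HTML as a string. `RobotsMetaParser` and `has_noindex` are hypothetical helper names, and the sketch only looks at `name="robots"` tags (not crawler-specific variants such as `name="googlebot"`).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()  # lowercase tokens such as "noindex", "nofollow"

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names, but not attribute values.
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").strip().lower() != "robots":
            return
        # content="noindex, follow" -> {"noindex", "follow"}
        for token in attr_map.get("content", "").split(","):
            token = token.strip().lower()
            if token:
                self.directives.add(token)


def has_noindex(html: str) -> bool:
    """True if the page's robots meta tag contains a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return "noindex" in parser.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(has_noindex(page))
```

A PDF or video has no HTML `<head>` to hold this tag, which is why the noindex approach is awkward for multimedia resources.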

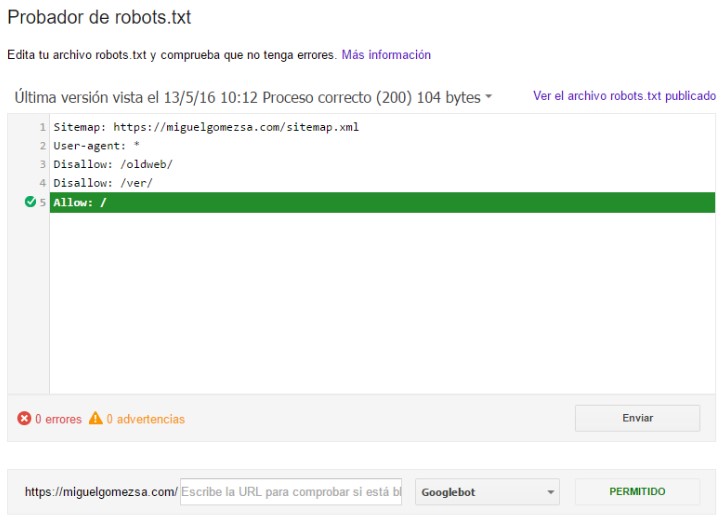

You can check how many pages you have indexed in Google Search Console. If the number matches the number of pages that you want indexed, you don’t need to bother with a robots.txt file. But if that number is higher than you expected (and you notice indexed URLs that shouldn’t be indexed), then it’s time to create a robots.txt file for your website.

Your first step is to actually create your robots.txt file. Being a text file, you can create one using Windows Notepad. And no matter how you ultimately make your robots.txt file, the format is exactly the same: a “User-agent” line followed by one or more “Disallow” lines.

User-agent is the specific bot that you’re talking to. And everything that comes after “Disallow” is a page or section that you want to block. For example, a group that starts with “User-agent: Googlebot” and has a Disallow line pointing at your image folder tells Googlebot not to crawl that folder.

You can also use an asterisk (*) to speak to any and all bots that stop by your website. With “User-agent: *”, the same Disallow line tells any and all spiders to NOT crawl your images folder.

This is just one of many ways to use a robots.txt file. This helpful guide from Google has more info on the different rules you can use to block or allow bots from crawling different pages of your site.

As you can see, we block spiders from crawling our WP admin page.
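To see how a crawler reads the User-agent and Disallow lines described above, here is a minimal sketch using Python’s standard-library `urllib.robotparser`. The rules and URLs are hypothetical: `/images/` and `/wp-admin/` stand in for an image folder and a WordPress admin area, and `example.com` is a placeholder domain. `build_parser` and `is_allowed` are illustrative helper names.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one group just for Googlebot, one for every other bot.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /images/

User-agent: *
Disallow: /wp-admin/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text in memory (no network request)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """True if `user_agent` may crawl `url` under the parsed rules."""
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    checks = [
        ("Googlebot", "https://example.com/images/logo.png"),     # Googlebot group
        ("Googlebot", "https://example.com/blog/post"),           # not disallowed
        ("Bingbot", "https://example.com/wp-admin/options.php"),  # falls back to *
        ("Bingbot", "https://example.com/images/logo.png"),       # * has no /images/ rule
        ("Googlebot", "https://example.com/wp-admin/"),           # Googlebot ignores *
    ]
    for agent, url in checks:
        verdict = "allowed" if is_allowed(rp, agent, url) else "blocked"
        print(f"{agent:<10} {url} -> {verdict}")
```

Note the last check: a crawler follows only the most specific group that names it, so Googlebot here obeys its own group and skips the `*` group’s `/wp-admin/` rule. Also keep in mind that `urllib.robotparser` does plain prefix matching and does not implement the `*` and `$` path wildcards that Google supports.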

Most major search engines (including Google, Bing and Yahoo) recognize and honor robots.txt requests.

Most websites don’t need a robots.txt file. That’s because Google can usually find and index all of the important pages on your site, and it will automatically NOT index pages that aren’t important or that duplicate other pages.

That said, there are 3 main reasons that you’d want to use a robots.txt file.

Block Non-Public Pages: Sometimes you have pages on your site that you don’t want indexed. For example, you might have a staging version of a page. Pages like this need to exist, but you don’t want random people landing on them. This is a case where you’d use robots.txt to block these pages from search engine crawlers and bots.

Maximize Crawl Budget: If you’re having a tough time getting all of your pages indexed, you might have a crawl budget problem. By blocking unimportant pages with robots.txt, you let Googlebot spend more of your crawl budget on the pages that actually matter.

Prevent Indexing of Resources: Using meta directives can work just as well as robots.txt for preventing pages from getting indexed. However, meta directives don’t work well for multimedia resources, like PDFs and images.

The bottom line? Robots.txt tells search engine spiders not to crawl specific pages on your website.
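To make the “Block Non-Public Pages” case concrete, here is a sketch that reports which URLs a given robots.txt blocks. It assumes a hypothetical `/staging/` folder and placeholder `example.com` URLs; `blocked_urls` is an illustrative helper built on Python’s standard `urllib.robotparser`. The second rule set shows how a single bare `Disallow: /` line blocks the entire site.

```python
from typing import List
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, user_agent: str, urls: List[str]) -> List[str]:
    """Return the URLs from `urls` that `user_agent` may NOT crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules: keep every crawler out of a staging area.
STAGING_RULES = """\
User-agent: *
Disallow: /staging/
"""

# The "disaster" case: a bare slash disallows every path on the site.
WHOLE_SITE_RULES = """\
User-agent: *
Disallow: /
"""

# Placeholder URLs on a hypothetical domain.
PAGES = [
    "https://example.com/",
    "https://example.com/blog/robots-txt-guide",
    "https://example.com/staging/new-homepage",
]

if __name__ == "__main__":
    print("staging rules block:", blocked_urls(STAGING_RULES, "Googlebot", PAGES))
    print("whole-site rules block:", blocked_urls(WHOLE_SITE_RULES, "Googlebot", PAGES))
```

Running a check like this against a list of your important URLs before publishing a new robots.txt is a cheap way to catch an accidental site-wide block.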

Robots.txt is a file that tells search engine spiders to not crawl certain pages or sections of a website.
